The convergence of generative artificial intelligence, high-reach podcasting, and political figureheads has created a new friction point in the digital information economy. When Donald Trump claimed that an AI-generated image depicted him as a doctor (an image Joe Rogan identified as depicting Jesus Christ), the resulting viral moment exposed more than a simple visual misidentification. It revealed a structural vulnerability of the modern information cycle, where the gap between what a model generates and what people perceive becomes a tool for both political branding and cultural satire.

To analyze this interaction, one must understand the three distinct layers of the event: the prompt engineering behind the image, the cognitive dissonance in political self-perception, and the role of the long-form podcast as a primary filter for mass-media narratives.

The Semantic Gap in Generative AI

An image generator does not understand human symbols; it produces pixel arrangements from statistical associations learned between text and images. When a user prompts a model for an image of a "savior" or a "healer," the model draws on training data in which the visual markers for "doctor" and "divine figure" may overlap in light, posture, and societal reverence.

The image in question featured a white-robed figure, a common trope in Western religious iconography. The discrepancy between Trump’s interpretation (medical professional) and Rogan’s (religious figure) highlights a failure in semantic alignment, which can be traced through three steps:

- Prompt Ambiguity: The original creator likely used "heroic" or "sacred" keywords.

- Visual Archetyping: The AI likely defaulted to the most common representation of those terms in its training data: Jesus.

- Subjective Projection: Trump projected a professional identity (doctor) onto a visual archetype that the general public recognizes as a religious one.

This misalignment is not a technical glitch but a fundamental property of how humans interact with black-box algorithms. We see what we are incentivized to see. For a political figure, the "doctor" framing suggests utility and competence; for a satirist or commentator like Rogan, the "Jesus" framing suggests an absurd level of self-deification.

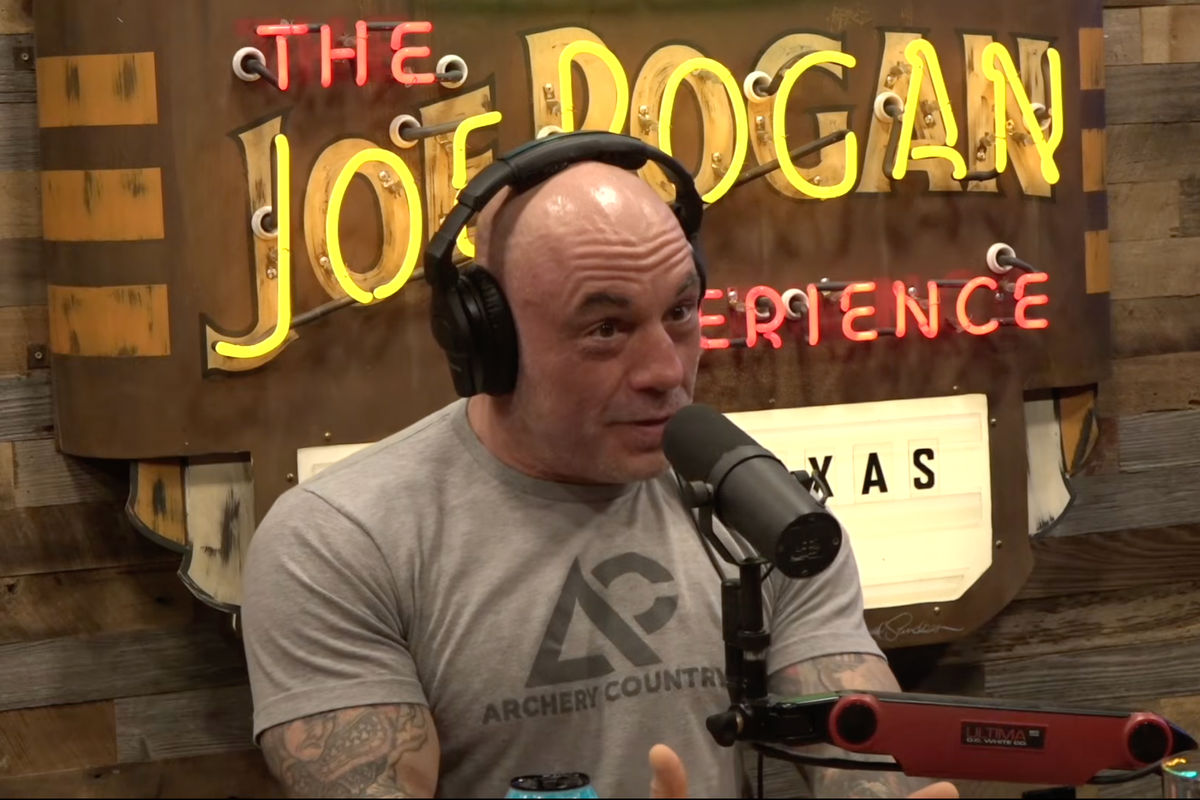

The Podcast as a Mechanism for Narrative Deconstruction

The Joe Rogan Experience serves as a high-bandwidth channel where traditional media guardrails are absent. In this environment, the "laughter" is not just entertainment; it is an analytical tool. When Rogan mocks the misidentification of the image, he is performing a real-time audit of a political claim.

This specific interaction functions through a mechanism of social proof. Because Rogan operates at the intersection of various subcultures—MMA, comedy, and tech—his reaction signals to a broad audience that the political narrative has deviated too far from objective reality. The laughter acts as a corrective force, deflating the gravity of the image and reclassifying it from "political propaganda" to "digital absurdity."

Cognitive Anchoring in Political Branding

The insistence that a robed figure is a doctor rather than a religious icon can be read as a form of cognitive anchoring. In political strategy, the objective is to associate the candidate with specific virtues, in this case healing or scientific authority.

When presented with an ambiguous visual stimulus (the AI image), the candidate’s internal branding strategy overrides the most obvious external interpretation. This creates a "Reality Gap" that can be quantified by the distance between the intended message and the audience's reception.

- Internal Anchor: The candidate views themselves as a fixer or healer of national issues.

- External Anchor: The public recognizes the long hair, white robes, and lighting as 21st-century digital religious kitsch.

- Resultant Friction: A breakdown in communication that leads to ridicule rather than persuasion.
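
The claim that the "Reality Gap" can be quantified is left abstract above. One simple way to read it, offered as an illustrative assumption rather than an established metric, is the share of audience readings that differ from the intended label. The function name `reality_gap` and the sample readings below are hypothetical and invented for illustration; they are not survey data.

```python
from collections import Counter


def reality_gap(intended: str, readings: list[str]) -> float:
    """Return the share of audience readings that differ from the intended label.

    0.0 means every reading matched the intended message; 1.0 means none did.
    This is an assumed, simplified definition of the "Reality Gap".
    """
    if not readings:
        raise ValueError("need at least one audience reading")
    # Normalize case and whitespace so "Doctor " and "doctor" count as the same label.
    counts = Counter(r.strip().lower() for r in readings)
    matches = counts[intended.strip().lower()]
    return 1 - matches / len(readings)


# Hypothetical, made-up sample of how four viewers described the image.
sample = ["religious figure", "religious figure", "doctor", "religious figure"]
gap = reality_gap("doctor", sample)
print(f"reality gap: {gap:.2f}")  # prints "reality gap: 0.75"
```

A real measurement would need an actual sample of audience responses and a more careful way of grouping free-text descriptions into labels; the sketch only shows the arithmetic behind the idea.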

This friction is amplified by the speed at which AI content is generated. Traditional political ads often go through extended focus-group testing to check that the semiotics land as intended. AI-generated content is often deployed instantly, bypassing these quality controls and leading to the "Jesus-Doctor" paradox where the candidate and the audience are looking at the same pixels but seeing two different realities.

The Economic Value of Viral Absurdity

From an attention economy perspective, the inaccuracy of the image is more valuable than a high-fidelity, accurate depiction. The viral nature of Rogan’s laughter creates a secondary market for the content.

- The Original Asset: The AI image itself.

- The Derivative Content: The video clip of the laughter.

- The Meta-Commentary: Articles and social media threads analyzing the reaction.

Each layer increases the "Total Reach" of the event. In this model, the fact that the image was "wrong" is the primary driver of its success. Had the image been a boring, accurate depiction of a doctor, it would never have reached Rogan’s desk. The error is the engine of the engagement.

Technical Limitations of AI Truth-Telling

The incident underscores a critical limitation in current LLM and image generation deployments: they lack "World Logic." An AI can generate a person in a lab coat, but it can also generate a person in a robe with a stethoscope. If the training data contains enough memes or satirical images where religious figures are depicted in medical settings, the boundaries between these categories blur.

This leads to a "Hallucination of Context." The AI didn't necessarily make a mistake; it provided a visual synthesis of two disparate concepts. The human observer—the candidate—then applied a specific, biased context to that synthesis. This reveals a broader truth about the future of AI in politics: it will be used less for factual representation and more as a Rorschach test for the candidate's own ego and the public's skepticism.

Strategic Realignment for Digital Communication

For organizations or figures navigating this space, the "Jesus-Doctor" incident provides a clear roadmap for avoiding narrative collapse.

- Semiotics Auditing: Before any AI-generated asset is shared, it must be audited by a human for "Iconographic Leakage"—the tendency for AI to insert religious or historical archetypes into secular prompts.

- Contextual De-risking: Communicators must assume that long-form media (podcasts) will find and exploit the most absurd interpretation of a visual asset.

- The Irony Hedge: If a figure shares an AI image, they must lean into the technological uncertainty of the medium rather than asserting a single, rigid interpretation.

The failure here was not the AI's inability to draw a doctor; it was the candidate's inability to recognize that the AI had drawn a messiah. In the age of algorithmic content, the person who controls the interpretation wins the narrative. By laughing, Rogan seized control of the interpretation, turning a branding attempt into a case study in digital delusion.

The operational reality is that AI-generated media is currently too unstable for unironic political messaging. The models are biased toward the dramatic, the iconic, and the exaggerated. When these traits meet a political ego that is equally prone to exaggeration, the result is a catastrophic failure of messaging. The "Jesus-Doctor" image will not be the last time a candidate misreads an algorithm; it is simply the most public data point in an ongoing trend of AI-driven narrative fragmentation.

The final strategic pivot must be a shift from "AI for Creation" to "AI for Verification." Until candidates use these tools to stress-test their own public images rather than just generating them, they will continue to provide the raw material for their own public deconstruction on platforms that prioritize truth-through-ridicule over scripted talking points.